memsearch

by zilliztech

A Markdown-first memory system, a standalone library for any AI agent. Inspired by OpenClaw.

1,188 stars

110 forks

Python

Added 2/13/2026

Category: AI Agents

Tags: agent, agent-memory, ai-agents, claude-code, claude-code-plugin, clawdbot, embeddings, harness, hybrid-search, long-term-memory, memory, milvus, openclaw, opencode, progressive-disclosure, rag, reranker, semantic-search, skills

Installation

# Add to your Claude Code skills

git clone https://github.com/zilliztech/memsearch

Why memsearch?

- 🌐 All Platforms, One Memory — memories flow across Claude Code, OpenClaw, OpenCode, and Codex CLI. A conversation in one agent becomes searchable context in all others — no extra setup

- 👥 For Agent Users, install a plugin and get persistent memory with zero effort; for Agent Developers, use the full CLI and Python API to build memory and harness engineering into your own agents

- 📄 Markdown is the source of truth, inspired by OpenClaw. Your memories are just .md files: human-readable, editable, version-controllable. Milvus is a "shadow index": a derived, rebuildable cache

- 🔍 Progressive retrieval, hybrid search, smart dedup, live sync: 3-layer recall (search → expand → transcript); dense vector + BM25 sparse + RRF reranking; SHA-256 content hashing skips unchanged content; file watcher auto-indexes in real time
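The dedup and reranking ideas in the last bullet can be sketched in a few lines of Python. This is an illustration only, not memsearch's actual code: the function names (`content_hash`, `chunks_to_index`, `rrf_fuse`), the sample rankings, and the constant `k = 60` (a commonly used RRF default) are all assumptions made for the example.

```python
import hashlib


def content_hash(text: str) -> str:
    """SHA-256 hex digest of a chunk's text, used as a change-detection key."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def chunks_to_index(chunks: list[str], indexed_hashes: set[str]) -> list[str]:
    """Keep only the chunks whose hash is not already present in the index."""
    return [c for c in chunks if content_hash(c) not in indexed_hashes]


def rrf_fuse(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Reciprocal Rank Fusion: score(d) = sum over rankings of 1 / (k + rank)."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties are broken by id so the order is stable.
    return sorted(scores, key=lambda d: (-scores[d], d))


dense = ["a", "b", "c"]   # hypothetical dense-vector ranking
sparse = ["b", "d", "a"]  # hypothetical BM25 ranking
fused = rrf_fuse([dense, sparse])
```

Documents that appear near the top of both rankings accumulate the largest fused score, which is why RRF needs no score normalization between the dense and sparse retrievers.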

🧑‍💻 For Agent Users

Pick your platform, install the plugin, and you're done. Each plugin captures conversations automatically and provides semantic recall with zero configuration.

For Claude Code Users

# Install

/plugin marketplace add zilliztech/memsearch

/plugin install memsearch

# Restart Claude Code to activate the plugin

After restarting, just chat with Claude Code as usual. The plugin captures every conversation turn automatically.

Verify it's working — after a few conversations, check your memory files:

ls .memsearch/memory/ # you should see daily .md files

cat .memsearch/memory/$(date +%Y-%m-%d).md
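The same check can be scripted in Python if a shell is not convenient. The sketch below assumes only what the commands above show: memory files live under `.memsearch/memory/` and are named by date as `YYYY-MM-DD.md`. The helper names are hypothetical.

```python
from datetime import date
from pathlib import Path


def daily_memory_file(root: Path, day: date) -> Path:
    """Path of the memory file for a given day, e.g. <root>/2026-02-13.md."""
    return root / f"{day.isoformat()}.md"


def list_memory_files(root: Path) -> list[Path]:
    """All .md files in the memory directory, sorted by name (oldest first)."""
    if not root.is_dir():
        return []
    return sorted(root.glob("*.md"))


if __name__ == "__main__":
    root = Path(".memsearch/memory")
    files = list_memory_files(root)
    print(f"{len(files)} memory file(s) found")
    today = daily_memory_file(root, date.today())
    if today.exists():
        print(today.read_text(encoding="utf-8"))
```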

Recall memories — two ways to trigger:

/memory-recall what did we discuss about Redis?

Or just ask naturally — Claude auto-invokes the skill when it senses the question needs history:

We discussed Redis caching before, what was the TTL we chose?

For OpenClaw Users

# Install from ClawHub

openclaw plugins install clawhub:memsearch

openclaw gateway restart

After installing, chat in TUI as usual. The plugin captures each turn automatically.

Verify it's working — memory files are stored in your agent's workspace:

# For the main agent:

ls ~/.openclaw/workspace/.memsearch/memory/

# For other agents (e.g. work):

ls ~/.openclaw/workspace-work/.memsearch/memory/

Recall memories — two ways to trigger:

/memory-recall what was the batch size limit we set?

Or just ask naturally — the LLM auto-invokes memory tools when it senses the question needs history:

We discussed batch size limits before, what did we decide?

For OpenCode Users

// In ~/.config/opencode/opencode.json

{ "plugin": ["@zilliz/memsearch-opencode"] }
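If the config file already has other settings, the plugin entry has to be merged in rather than pasted over them. A minimal sketch of that merge follows, assuming the file is plain JSON without comments and that `"plugin"` is a list of package names as shown above. The helper names are hypothetical.

```python
import json
from pathlib import Path

PLUGIN = "@zilliz/memsearch-opencode"


def add_plugin(config: dict, plugin: str = PLUGIN) -> dict:
    """Return a copy of the config with `plugin` listed exactly once."""
    updated = dict(config)
    plugins = list(updated.get("plugin", []))
    if plugin not in plugins:
        plugins.append(plugin)
    updated["plugin"] = plugins
    return updated


def update_config_file(path: Path) -> None:
    """Read, patch, and rewrite the config; creates the file if it is missing."""
    config = json.loads(path.read_text(encoding="utf-8")) if path.exists() else {}
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(add_plugin(config), indent=2) + "\n", encoding="utf-8")
```

Calling `update_config_file(Path.home() / ".config/opencode/opencode.json")` twice leaves a single plugin entry, so the step is safe to repeat.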

After installing, chat in TUI as usual. A background daemon captures conversations.

Verify it's working:

ls .memsearch/memory/ # daily .md files appear after a few conversations

Recall memories — two ways to trigger:

/memory-recall what did we discuss about authentication?

Or just ask naturally — the LLM auto-invokes memory tools when it senses the question needs history:

We discussed the authentication flow before, what was the approach?

For Codex CLI Users

# Install

bash memsearch/plugins/codex/scripts/install.sh

codex --yolo # needed for ONNX model network access

After installing, chat as usual. Hooks capture and summarize each turn.

Verify it's working:

ls .memsearch/memory/

Recall memories — use the skill:

$memory-recall what did we discuss about deployment?

⚙️ Configuration (all platforms)

All plugins share the same memsearch backend. Configure once, works everywhere.

Embedding

Defaults to ONNX bge-m3 — runs locally on CPU, no API key, no cost. On first launch the model (~558 MB) is downloaded from HuggingFace Hub.

memsearch config set embedding.provider onnx # default — local, free

memsearch config set embedding.provider openai # needs OPENAI_API_KEY

memsearch config set embedding.provider ollama # local, any model

All providers and models: Configuration — Embedding Provider

Milvus Backend

Change milvus.uri (and optionally milvus.token) to switch between deployment modes:

Milvus Lite (default) — zero config, single file. Great for getting started:

# Works out of the box, no setup needed

memsearch config get milvus.uri # → ~/.memsearch/milvus.db

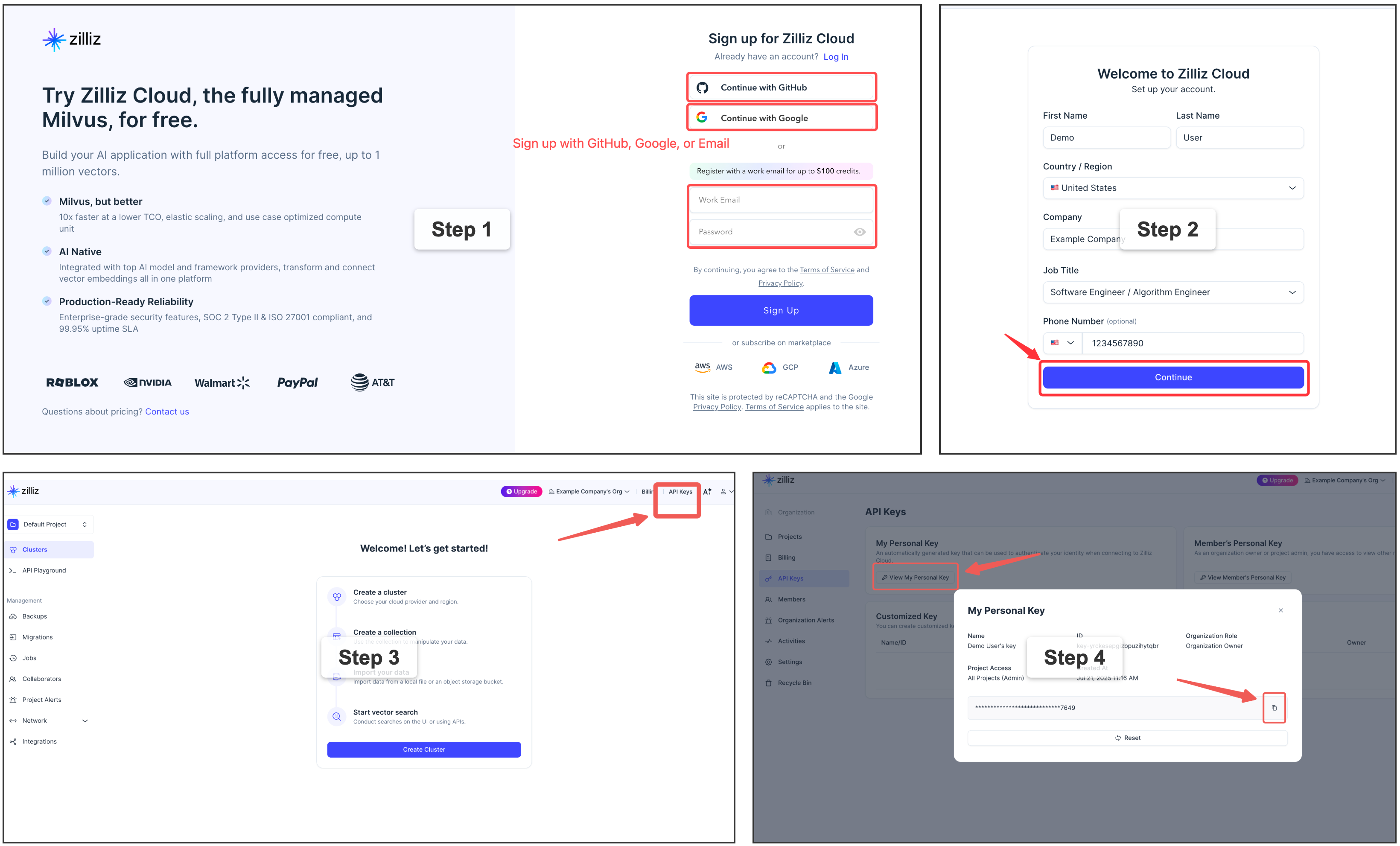

⭐ Zilliz Cloud (recommended) — fully managed, free tier available — sign up 👇:

memsearch config set milvus.uri "https://in03-xxx.api.gcp-us-west1.zillizcloud.com"

memsearch config set milvus.token "your-api-key"

You can sign up on Zilliz Cloud to get a free cluster and API key.

Self-hosted Milvus server: for multi-user or team environments with a dedicated Milvus instance. Requires Docker. See the official installation guide.

memsearch config set milvus.uri http://localhost:19530
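Because the modes differ only in the URI, the mode can be read off the URI's shape. The helper below is a hypothetical illustration of that mapping, not part of memsearch. It assumes a local file path means Milvus Lite, a `zillizcloud.com` host means Zilliz Cloud, and any other HTTP(S) endpoint is a self-hosted server.

```python
from urllib.parse import urlparse


def milvus_mode(uri: str) -> str:
    """Guess the deployment mode from the shape of the URI (illustrative only)."""
    parsed = urlparse(uri)
    if parsed.scheme not in ("http", "https"):
        return "milvus-lite"      # local file path such as ~/.memsearch/milvus.db
    host = parsed.hostname or ""
    if host.endswith("zillizcloud.com"):
        return "zilliz-cloud"     # managed endpoint; also needs milvus.token
    return "milvus-server"        # self-hosted, e.g. http://localhost:19530
```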

📖 Full configuration guide: Configuration · Platform comparison

🛠️ For Agent Developers

Beyond ready-to-use plugins, memsearch provides a complete CLI and Python API for building memory into your own agents. Whether you're adding persistent context to a custom agent, building a memory-augmented RAG pipeline, or doing harness engineering — the same core engine that powers the plugins is available as a library.

🏗️ Architecture Overview

┌──────────────────────────────────────────────────────────────┐
│  🧑‍💻 For Agent Users (Plugins)                                │
│  ┌──────────┐ ┌──────────┐ ┌──────────┐ ┌────────┐ ┌──────┐  │
│  │ Claude   │ │ OpenClaw │ │ OpenCode │ │ Codex  │ │ Your │  │
│  │ Code     │ │ Plugin   │ │ Plugin   │ │ Plugin │ │ App  │  │
│  └────┬─────┘ └────┬─────┘ └────┬─────┘ └───┬────┘ └──┬───┘  │
│ └─────────────┴────────────┴───────
